Fully allocated delivery team

Works on your initiative only

Best for ongoing work

Choose KPS as your partner to integrate AI into your organization's products, systems, and workflows. We design and deliver AI integration solutions that fit real business operations and can be maintained over time. This includes internal workflows, customer-facing processes, and the system connections needed to support them.

Benefits

AI rarely improves workflows when it stays isolated from the systems and processes that teams use every day. A structured approach to AI integration closes gaps between tools, reduces repetitive manual tasks, and makes AI easier to apply in day-to-day work. Teams can then reach operational KPIs, reduce employee workload, and use AI for practical execution rather than for show.

Connects AI capabilities to the platforms, data sources, and workflows already used across the business

Improves how current systems function without requiring full replacement

Reduces fragmentation caused by disconnected tools and isolated AI experiments

Automates repetitive tasks such as document handling, data entry, content processing, and internal routing

Frees teams from routine steps that slow down daily work

Reduces operational overhead without increasing headcount for the same volume of work

Brings AI-generated insights closer to the systems where teams already review information and act on it

Improves access to relevant data across business functions

Supports faster responses by reducing delays between analysis and action

Standardizes how information moves across workflows, teams, and systems

Reduces handoff gaps that create rework or missed steps

Makes execution more predictable across internal and customer-facing processes

Supports quicker responses in customer service, internal support, and information-heavy use cases

Improves how users access information, recommendations, and task-related guidance

Reduces wait times when AI is connected to live business data and service processes

Fits AI into the current architecture in a way that is easier to manage over time

Avoids adding another disconnected layer of tooling that becomes difficult to support

Creates a more stable foundation for extending AI use cases as business needs evolve

Need custom AI integration services for your operations?

KPS provides a structured way to evaluate where AI fits current processes, what the integration scope should include, and which use cases are worth prioritizing first.

Our Services

Integrating AI into your business involves work on data access, system boundaries, process adjustments, model selection, and ongoing support. At KPS, we guide you through each step so you can move confidently from standalone tools to fully embedded AI solutions.

Defines where AI fits current operations, which use cases are realistic, and what constraints need to be addressed before implementation starts. This helps reduce misalignment between business goals, data availability, and technical scope.

Connects existing AI services and models to internal systems, customer-facing platforms, and daily workflows. This approach works well when businesses need faster adoption without building every capability from scratch.

Embeds generative AI into content, search, assistance, or knowledge-heavy processes where teams need faster outputs and easier access to information. The focus stays on making AI usable inside real execution flows rather than keeping it separate from day-to-day work.

Covers cases where standard tools do not fit the business logic, data structure, or operational requirements. This service supports more tailored implementations such as predictive models, classification, recommendation logic, or domain-specific automation.

Organizes, cleans, and connects business data so AI outputs can rely on information that is relevant, accessible, and usable across systems. Better data preparation reduces weak outputs and lowers friction during implementation.

Adjusts existing processes, integrations, or legacy flows so AI can be introduced without creating more fragmentation. This is often necessary when current systems were not designed to support automation or AI-driven decision points.

Prepares the technical environment needed to run AI reliably within the current architecture, including integration points, deployment setup, and security considerations. This helps reduce operational risk as AI moves from the pilot stage into regular use.

Supports the integration after launch through monitoring, adjustments, and incremental improvements as business needs change. This keeps the solution relevant as workflows evolve, data sources expand, and usage patterns become clearer.

Technology Stack

AI integration depends on how well different parts of the system work together: models, data, backend logic, and infrastructure. KPS uses tools and technologies that support stable integrations, predictable data flow, and long-term operation within your business systems.

Model providers: OpenAI, Azure OpenAI, Google Vertex AI, Azure AI Services

Core capabilities: text generation, summarization, classification, semantic search

Model access methods: APIs, SDKs, managed AI services

Languages and runtimes: Node.js, Python, Java, .NET

Frameworks: FastAPI, Django, NestJS, Express.js, ASP.NET Core

API and communication: REST, GraphQL, WebSockets, RabbitMQ, Kafka, event-driven architectures

Relational databases: PostgreSQL, MySQL, Microsoft SQL Server

NoSQL and in-memory stores: MongoDB, Redis, Elasticsearch

Data management practices: data modeling, caching strategies, schema migrations, data synchronization

Cloud platforms: AWS, Google Cloud Platform, Microsoft Azure

Containerization and orchestration: Docker, Kubernetes, Helm

CI/CD and automation: GitHub Actions, GitLab CI, Jenkins, Azure DevOps

Security mechanisms: OAuth 2.0, OpenID Connect, role-based access control, data encryption at rest, data encryption in transit

Compliance and governance: GDPR-aware data handling, SOC 2 readiness, industry-specific compliance requirements

Identity and access tools: Auth0, Okta, Azure Active Directory

Backend testing tools: PyTest, Jest, JUnit, NUnit

API and integration testing: Postman, Playwright, Cypress

Quality practices: automated test pipelines, code reviews, static code analysis, performance testing

Monitoring and logging: Prometheus, Grafana, ELK Stack, Datadog

Error tracking and diagnostics: Sentry, New Relic

Operational visibility: system health monitoring, alerting, usage tracking

Enterprise systems: CRM systems, ERP systems, CMS platforms, analytics platforms

Productivity and communication tools: Slack, Microsoft Teams, Google Workspace

Storage and document services: AWS S3, Google Cloud Storage, Azure Blob Storage

Engagement Models

AI integration services can be structured in different formats depending on scope, internal capacity, and the level of delivery ownership required. KPS offers collaboration formats that fit both long-term integration initiatives and defined implementation tasks while keeping responsibilities clear.

Our Process

AI integration requires decisions about business logic, existing systems, data availability, and delivery priorities before implementation starts. The process below keeps the work focused on those priorities from the first step.

STEP 1:

Product stakeholders, business analysts, and solution architects, together with the client, review the business goal, current workflows, existing systems, and operational constraints. This step clarifies where AI fits in the process, what problems it should address, and which limitations may affect implementation.

STEP 2:

Business analysts and solution architects define the integration scope, priority use cases, required data inputs, and expected outputs. Delivery managers also clarify dependencies, ownership, and the level of change needed across connected systems.

STEP 3:

Solution architects and senior engineers define the integration approach, system boundaries, API logic, and data flow structure. Technology choices, security requirements, and implementation priorities are aligned with the current architecture and delivery context.

STEP 4:

Backend engineers, AI engineers, and integration specialists connect models, services, and business systems within the agreed scope. They implement integration points, workflow logic, and supporting backend components. As a result, you get a testable MVP configured around selected processes and sample data, so the integration can be validated before wider rollout.

STEP 5:

QA engineers, backend engineers, and DevOps specialists validate functionality, data handling, and system behavior before release. This includes testing integration points, checking failure scenarios, and preparing the environment for controlled deployment.

STEP 6:

Support engineers, DevOps specialists, and delivery managers monitor usage, review system behavior, and identify needed adjustments after launch. This step supports further optimization as workflows change, usage expands, or new requirements appear.
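As an illustration of the system boundaries and failure-scenario checks described in the steps above, here is a minimal Python sketch. It assumes a ticket-routing workflow; the names `TextModel`, `route_ticket`, `KeywordModel`, `FailingModel`, and the `manual_review` queue are hypothetical and not part of any KPS deliverable or provider SDK. The idea is that business logic depends on a small interface rather than on a specific model provider, and that a provider outage falls back to a manual path instead of blocking the workflow.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical boundary between business logic and a model provider."""

    def classify(self, text: str) -> str: ...


@dataclass
class RoutingResult:
    queue: str
    used_fallback: bool


class KeywordModel:
    """Test double standing in for a real provider call (assumption)."""

    def classify(self, text: str) -> str:
        return "billing" if "invoice" in text.lower() else "general"


class FailingModel:
    """Test double that simulates a provider outage."""

    def classify(self, text: str) -> str:
        raise TimeoutError("provider unavailable")


def route_ticket(
    text: str, model: TextModel, default_queue: str = "manual_review"
) -> RoutingResult:
    """Route a ticket via the model; fall back to a manual queue on failure."""
    try:
        return RoutingResult(queue=model.classify(text), used_fallback=False)
    except Exception:
        # Failure scenario: keep the workflow moving without the model.
        # A real integration would likely log the error and narrow the
        # exception types it catches.
        return RoutingResult(queue=default_queue, used_fallback=True)
```

Because the provider sits behind one interface, the same routing logic can be checked against a working stand-in and a failing one before any real service is connected.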

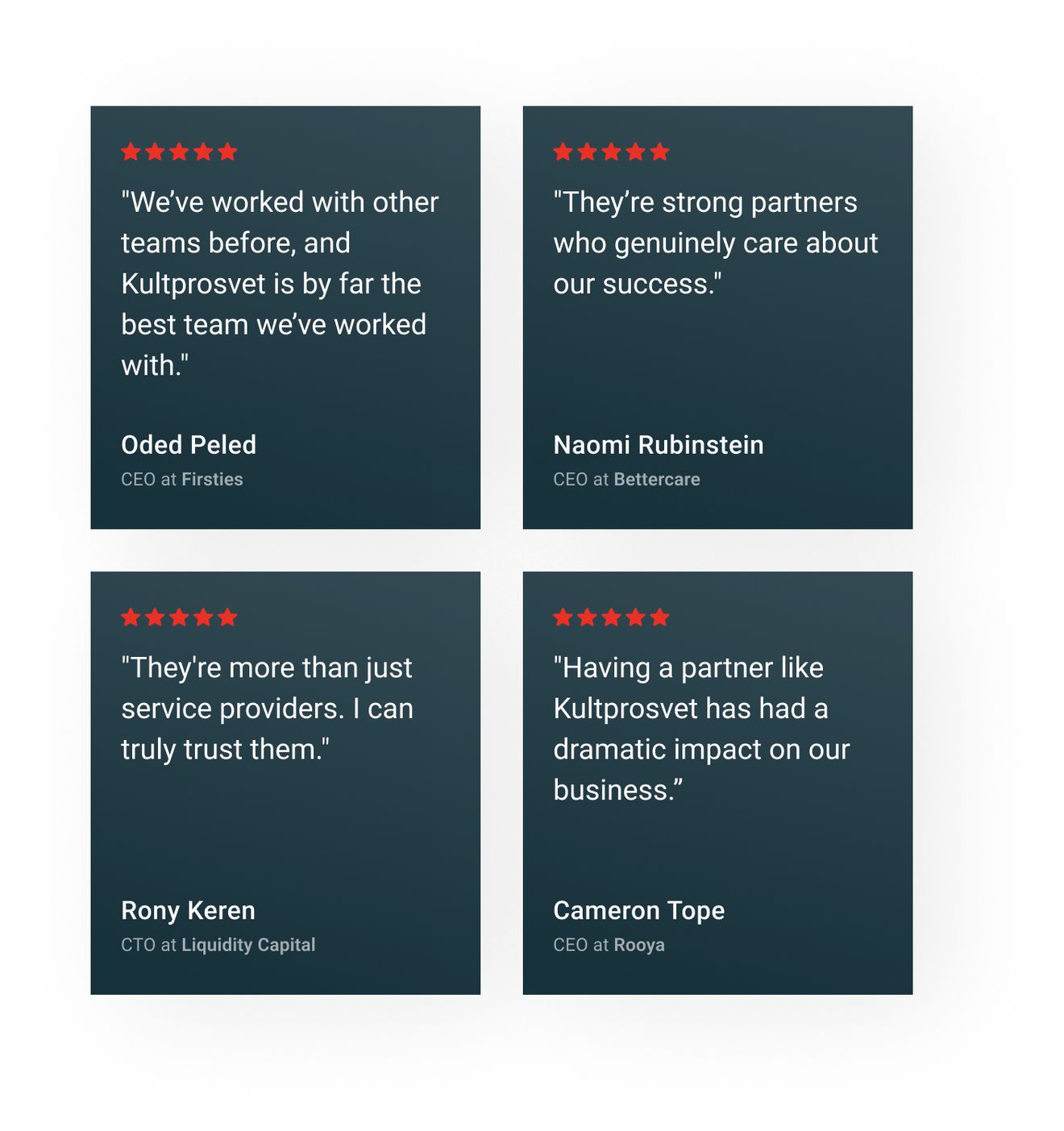

Clients' feedback

Feedback from clients reflects how the AI integration process develops over time, how delivery holds up in practice, and how the final result supports real business needs.

Since working with Kultprosvet, our customers are much happier with the product and its UX. They’ve added flexibility where the system was previously rigid, and they take full responsibility for the project, quickly fixing any issues that arise.

Naomi Rubinstein

Founder at Bettercare

They are the best team we have ever worked with. The application increased the speed of receiving data by 4 times. Data loss was reduced by 10%. Ineffective tasks decreased by 7%. Response rate to customer requests increased by 23%. Our customers have seen significant increases in efficiency.

Aleksandr Podolyan

Technical Specialist & Product Manager, RDO Ukraine

Kultprosvet has executed deliverables perfectly and provided us with a high-quality application. They’ve fulfilled our requirements, and the product perfectly fits our needs. The team’s development efforts have helped our business immensely.

Oleksandr Zainchukivskyi

Head of Technology, AMACO

We've had a very good experience with them. We trust them, and we'll continue to work with them. If we ever need something done, they always deliver.

Luc Lecorre

Co-Investor, Luxury Handbag Company

Kultprosvet was highly knowledgeable, and they made us aware of some issues we hadn’t considered. They explained everything very clearly and helped us understand the broader scope of the work.

Yulia Goldenberg

PhD Researcher, Ben Gurion University of the Negev

The work is always delivered on time, and they are very fair about the pricing. Kultprosvet is transparent, and we know that we can trust them; we are never surprised by anything that comes up.

Cameron Tope

Founder, Rooya (Polysurance)

OUR TEAM

Contact our client support team for a technical evaluation of your needs and details on the collaboration resources your project will require.
