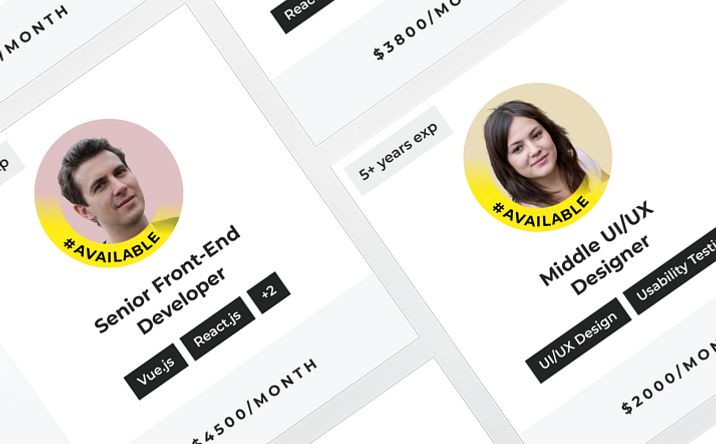

Fully allocated team

Works on your initiative

Builds domain knowledge

KPS develops GenAI-powered solutions, including AI assistants, copilots, knowledge-base systems, intelligent document processing tools, automated content generation tools, and workflow automation solutions built around your business processes, internal data, and operational needs. We build these solutions with LLMs such as GPT, Llama, Claude, Gemini, Mistral, and others to help your company speed up routine work, improve access to information, respond faster, and reduce the cost of manual operations across daily processes.

Benefits

If your day-to-day operations depend too heavily on manual handling, repeated coordination, and tasks that pull people away from higher-value work, generative AI solutions can help make these processes easier to manage. For many businesses, this means faster access to information, more consistent execution, smarter resource allocation, and less team time spent on work that should not require constant human involvement.

Reduces time spent on repetitive content, support, and internal process tasks

Simplifies multi-step work that previously depended on manual coordination

Supports faster execution across teams without increasing routine workload

Improves access to internal documentation, policies, and operational information

Helps employees find relevant answers without searching across multiple systems

Reduces dependency on specific team members for recurring knowledge-based requests

Standardizes answers across support, operations, and internal communication flows

Reduces variation in how information is delivered to users or employees

Helps teams handle larger volumes of requests with more predictable output quality

Extends existing workflows without requiring proportional growth in headcount

Supports higher request volumes during growth, seasonal demand, or internal change

Makes repeated business processes easier to maintain as operations become more complex

Connects AI functionality with business tools, data sources, and operational platforms

Reduces friction between standalone AI use and real process execution

Supports adoption in environments where teams already depend on established systems

Enables gradual rollout based on specific use cases rather than broad disruption

Supports ongoing refinement as business needs, data, and usage patterns evolve

Reduces the risk of building AI features that look promising but do not fit daily work

Redirects team time from manual handling to core responsibilities

Reduces unnecessary human involvement in repeatable operational tasks

Helps control costs by using team capacity more deliberately

Planning to work with a generative AI app development company?

KPS helps define where generative AI can create practical value, what data and systems need to be involved, and how the solution can fit into your business processes without disrupting them.

Our Services

KPS turns generative AI use cases across core business areas into working solutions. We support planning, development, integration, customization, and post-launch improvement, depending on the role generative AI is expected to play in your daily operations.

Assess business goals, process constraints, and implementation priorities before development begins. This helps focus effort on use cases that are realistic, relevant, and worth operationalizing.

Design and build generative AI applications around specific business tasks, user flows, and internal systems. The result is a solution that fits existing operations more closely than generic standalone tools.

Build copilots that assist employees with internal tasks, document handling, and process-specific work inside existing environments. This supports faster execution while keeping human review where accuracy or judgment still matters.

Develop AI agents that can retrieve information, handle requests, coordinate tasks, and support multi-step workflows. Such agents reduce manual involvement in repeatable processes and help teams manage growing operational load.

Create retrieval-based solutions connected to internal documents, support materials, policies, and structured business knowledge. Better access to relevant information improves response quality and reduces time spent searching across systems.

Adapt language models to domain-specific terminology, data, and task requirements. More relevant output makes the solution easier to use in everyday work and reduces the effort required for corrections.

Integrate generative AI functionality with business platforms, APIs, data sources, and internal tools. This makes the solution part of real workflows rather than a separate layer that teams rarely use.

Support launched solutions through monitoring, updates, refinement, and adjustments based on business feedback and usage patterns. Continuous improvement helps maintain quality as workflows, content, and requirements change.

AI engineers and solution architects develop agentic AI systems that can plan steps, use tools, retrieve information, and complete multi-stage tasks within defined business rules. These systems help reduce manual coordination in processes that require several connected actions.

Technology Stack

KPS works with a broad technology stack across AI, software engineering, data, cloud, and security layers, so the list below shows only the main tools and categories our specialists can support. The exact stack depends on the solution type, existing systems, data environment, and production requirements.

LLMs: OpenAI GPT, Claude, Gemini, Llama, Mistral, Cohere

Image and multimodal models: DALL-E, Stable Diffusion, Midjourney

Model types used based on task: text generation, summarization, question answering, image generation, multimodal processing

ML frameworks: PyTorch, TensorFlow, Keras

Model and NLP libraries: Hugging Face Transformers, NVIDIA NeMo, AllenNLP, fastai

Applied AI components: NLP pipelines, transformer-based architectures, model fine-tuning workflows

LLM orchestration: LangChain

Application layers: agent workflows, copilot logic, prompt pipelines, multi-step reasoning flows

Implementation patterns: conversational AI, retrieval flows, task routing, multi-turn interactions

Databases: PostgreSQL, MySQL, MongoDB

Search and caching: Elasticsearch, Redis

Retrieval components: document ingestion, embedding pipelines, metadata filtering, RAG-based access to internal knowledge

Languages and runtimes: Python, Node.js, Java, .NET, Go

Backend frameworks: FastAPI, Django, Flask, NestJS, Express.js

API and communication: REST APIs, GraphQL, WebSockets, message queues, event-driven integrations

Cloud platforms: AWS, Google Cloud Platform, Microsoft Azure

Containers and orchestration: Docker, Kubernetes

Deployment support: CI/CD pipelines, model hosting, scalable inference environments, environment isolation

Observability: logging, error tracking, usage monitoring, diagnostics

Optimization areas: latency reduction, prompt refinement, output evaluation, cost and performance tuning

Operational support: post-launch monitoring, model updates, workflow adjustments, quality improvement cycles

Access and identity: role-based access control, authentication layers, API security

Data protection: encryption at rest, encryption in transit, secure storage practices

Governance considerations: GDPR-aware handling, internal data boundaries, controlled access to business content

Engagement Models

Work formats from KPS for generative AI implementation

Generative AI initiatives vary in scope, ownership, and delivery complexity. We offer collaboration formats that fit different levels of control, internal involvement, and project definition.

Our Process

A workable AI solution is shaped by several factors at once: business context, data readiness, model behavior, and integration with existing systems. Our process is built to keep these parts aligned from planning through post-launch improvement:

STEP 1:

Product stakeholders, business analysts, and AI consultants clarify expectations with the client’s decision-makers and in-house team: what the solution should do, who will use it, and which business problem it should address first. This step includes scope definition, process review, and early feasibility assessment, so the project starts from a realistic operational need.

STEP 2:

Data engineers, AI engineers, and solution architects review the data sources, document flows, systems, and process dependencies the solution will rely on. Their work helps identify data gaps, integration constraints, and content quality issues before implementation moves forward.

STEP 3:

Solution architects and senior engineers define the model approach, system structure, integration logic, and security boundaries for the solution. This includes deciding how the AI layer will interact with internal systems, user flows, and operational processes that do not depend on AI.

STEP 4:

AI engineers, backend developers, and frontend developers build the solution in iterations, while QA specialists validate outputs, flows, and system behavior along the way. Incremental delivery makes it easier to test assumptions early and adjust prompts, logic, or interfaces before release.

STEP 5:

QA engineers, DevOps specialists, and developers prepare the solution for production, validate reliability, and coordinate deployment into the target environment. At this stage, the solution is integrated with existing systems and checked for compatibility, stability, and controlled rollout.

STEP 6:

Support specialists monitor usage, review output quality, and refine the solution after launch. Ongoing updates help the system stay useful as workflows, content, and business requirements change over time.


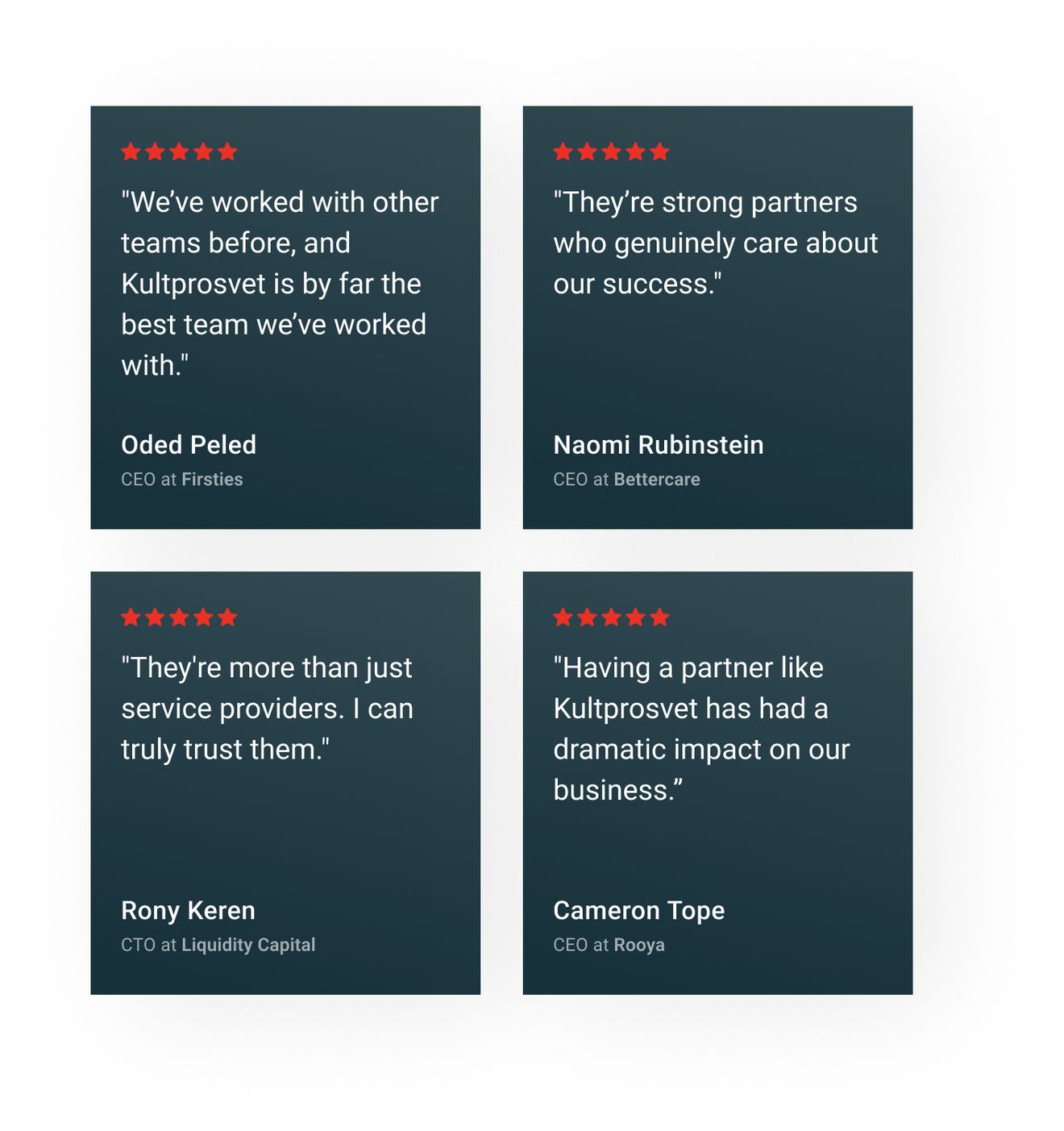

Clients' feedback

What clients notice most is how the solution works in practice, how easily teams adopt it, and the value it delivers over time.

Since working with Kultprosvet, our customers are much happier with the product and its UX. They’ve added flexibility where the system was previously rigid, and they take full responsibility for the project, quickly fixing any issues that arise.

Naomi Rubinstein

Founder at Bettercare

They are the best team we have ever worked with. The application increased the speed of receiving data by 4 times. Data loss was reduced by 10%. Ineffective tasks decreased by 7%. Response rate to customer requests increased by 23%. Our customers have seen significant increases in efficiency.

Aleksandr Podolyan

Technical Specialist & Product Manager, RDO Ukraine

Kultprosvet has executed deliverables perfectly and provided us with a high-quality application. They’ve fulfilled our requirements, and the product perfectly fits our needs. The team’s development efforts have helped our business immensely.

Oleksandr Zainchukivskyi

Head of Technology, AMACO

We've had a very good experience with them. We trust them, and we'll continue to work with them. If we ever need something done, they always deliver.

Luc Lecorre

Co-Investor, Luxury Handbag Company

Kultprosvet was highly knowledgeable, and they made us aware of some issues we hadn’t considered. They explained everything very clearly and helped us understand the broader scope of the work.

Yulia Goldenberg

PhD Researcher, Ben Gurion University of the Negev

The work is always delivered on time, and they are very fair about the pricing. Kultprosvet is transparent, and we know that we can trust them; we are never surprised by anything that comes up.

Cameron Tope

Founder, Rooya (Polysurance)

OUR TEAM

Contact our client support team for a technical evaluation of your needs and details on the collaboration resources your project will require.
