Fully allocated QA team

Builds long-term product knowledge

Best for ongoing delivery

KPS provides quality assurance services throughout the entire software development lifecycle (SDLC) to reduce release risk, improve software reliability, and keep product quality stable as delivery becomes more complex. Our teams help find, eliminate, and prevent defects before release, expand test coverage for critical workflows, and build QA processes that support clear, consistent release decisions.

Benefits

As release activity increases alongside product development, quality issues begin to affect planning, team coordination, and post-release workload. A strong QA setup helps reduce production issues, lower the cost of avoidable fixes, use specialist time more efficiently, and keep testing practices consistent as products and teams grow.

Reduces the number of defects in production

Improves confidence before release

Keeps delivery more predictable over time

Reveals issues earlier in the delivery cycle

Makes recurring quality problems easier to track

Reduces the effort needed for late fixes

Strengthens validation of critical workflows

Expands coverage for new features and changes

Reduces gaps in important testing areas

Makes release decisions clearer for teams

Reduces uncertainty before deployment

Keeps testing aligned with delivery priorities

Establishes clearer testing routines across releases

Reduces reliance on ad hoc checks

Keeps quality practices more consistent as teams grow

Improves the stability of daily product use

Reduces disruption caused by avoidable issues

Protects important user flows during change

Considering software quality assurance services for your product?

KPS helps define the right QA approach and set clear QA practices, so teams can validate changes consistently without extra coordination at every step.

Our Services

Our quality assurance services cover different stages of product delivery, depending on how releases are planned, how testing is currently organized, and where quality risks appear most often. Test documentation, testing scripts, and core QA practices are also recorded in a structured way, so the team can keep using them later with internal resources or another vendor.

Manual testing checks features, user flows, and system behavior in a structured way. It is especially important where detailed human review is needed to confirm expected results and catch defects that automated checks may miss.

Regression testing checks whether new changes have affected existing functionality. It is essential for products with frequent releases, shared components, or critical workflows that must stay stable across updates.

Automated test scripts are created for repeatable scenarios, critical user journeys, and release validation. They extend automated coverage and keep quality control consistent as delivery speed increases.

A QA process audit shows how validation is planned, executed, and documented across the delivery cycle. It helps identify weak points, unclear responsibilities, and gaps that make releases harder to control.

Release testing confirms that new functionality, fixes, and integrations are ready for deployment. It gives teams a clearer basis for release decisions and more reliable validation before launch.

Test documentation is prepared to make validation more structured and repeatable. Clear documentation also helps teams keep testing consistent across releases and reduces reliance on informal knowledge.

Integration testing checks how different parts of the system work together after changes are introduced. It helps detect issues in data exchange, service interaction, and connected workflows before they affect production use.

Smoke testing is used after builds or deployments to confirm that the main functions work at a basic level. It gives teams a quick way to detect major issues early and keeps broken builds from moving further down the delivery pipeline.

Technology Stack

The QA toolset should match the product, the testing scope, and the environments where testing takes place. KPS works with testing frameworks, automation tools, and related platforms suited to different release models, system types, and quality control requirements.

Model providers: OpenAI, Anthropic

Automation frameworks: Playwright, Cypress, Selenium, WebdriverIO

Automated UI testing: Playwright, Cypress, Selenium

Mobile automation: Appium

API testing tools: Postman, Swagger, SoapUI, Insomnia, REST Assured

Service validation: REST APIs, GraphQL APIs, microservice communication

Data exchange checks: request validation, response validation, schema checks

Performance testing tools: JMeter, k6, Gatling, LoadRunner, Locust

Load testing scenarios: concurrent users, traffic spikes, response time checks

System behavior checks: throughput, latency, stability under load

Test management platforms: TestRail, Zephyr, Xray, Qase, TestLink

Documentation tools: Confluence, Notion, Jira

Testing assets: test cases, checklists, bug reports, validation notes

CI/CD tools: GitHub Actions, GitLab CI, Jenkins, Azure DevOps, CircleCI

Release validation support: build checks, smoke tests, regression runs

Deployment workflows: continuous integration, release pipelines, test execution in staging

Cross-browser testing tools: BrowserStack, Sauce Labs, LambdaTest

Device coverage: desktop browsers, tablets, mobile devices, real device labs

Compatibility testing areas: browser behavior, screen sizes, operating systems

Security testing tools: OWASP ZAP, Burp Suite, Snyk, SonarQube

Validation areas: vulnerability checks, insecure dependencies, common web security risks

Compliance support: GDPR-aware testing, access control checks, data handling review

Accessibility testing tools: axe, Lighthouse, WAVE, NVDA, VoiceOver

Cloud and environment support: AWS, Google Cloud Platform, Microsoft Azure

Environment technologies: Docker, Kubernetes, test environments, staging environments

Engagement Models

Engagement models for software QA consulting services

QA support can be structured in different ways depending on release frequency, testing scope, and the level of ownership your team wants to keep. KPS offers flexible work formats that fit both ongoing product delivery and clearly defined testing needs.

Our Process

QA work requires a clear workflow, well-defined responsibilities, and consistent verification steps throughout the SDLC. Our process is built around six practical steps that help teams understand exactly what will be tested, how quality risks will be addressed, and how testing aligns with release decisions.

STEP 1:

Product stakeholders, delivery managers, and QA leads review the release scope, critical workflows, recent changes, known risk areas, and product goals related to user experience. This step sets testing priorities, highlights the areas with the highest delivery impact, and gives the team a clearer basis for planning validation.

STEP 2:

QA leads and QA engineers define the testing scope, coverage, acceptance criteria, and validation approach for the release. The resulting test plan covers functional checks, regression priorities, environment needs, and the activities required to support reliable release decisions.

STEP 3:

QA engineers prepare test cases, checklists, test data, and the environments required for execution. When automated checks are included in the testing scope, automation engineers update existing scripts or prepare new ones for repeatable validation.

STEP 4:

The QA team runs planned checks across critical user flows, changed functionality, integrations, and risk areas. Findings are documented with clear reproduction steps, expected behavior, actual behavior, and release impact so developers can address issues without delay.

STEP 5:

QA engineers, developers, and delivery leads prioritize detected issues by severity, business impact, and release timing. After fixes are applied, the QA team rechecks affected areas and summarizes open risks, blocked items, and remaining concerns that may affect release quality.

STEP 6:

After deployment, QA specialists verify critical production flows and review whether release-related issues appeared in live use. The results are used to confirm stability, document gaps that testing missed, and adjust future testing priorities where needed.

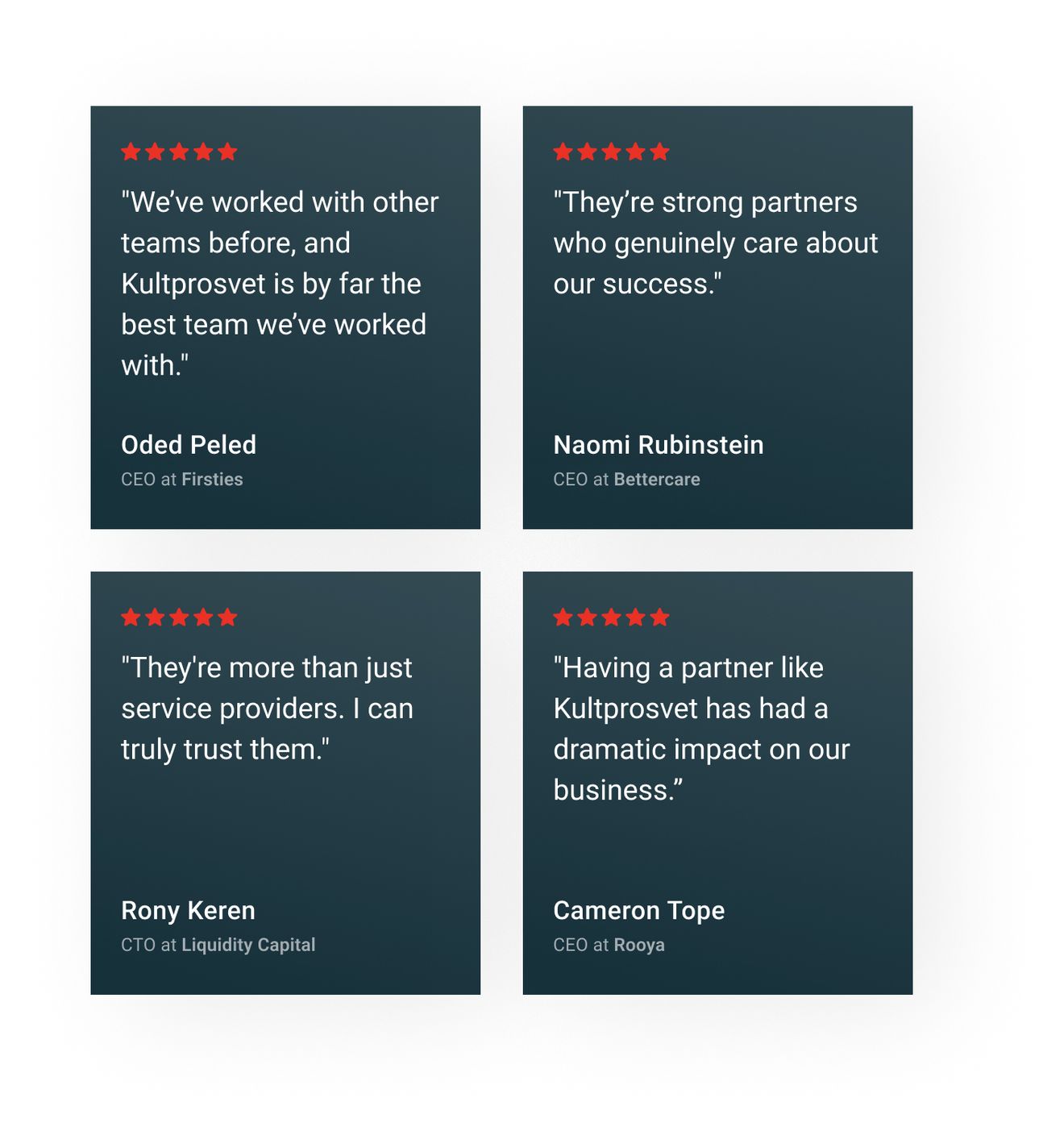

Client feedback

What matters most is how QA affects the release process once it is in place. Our clients' feedback reflects its impact on defect rates, release predictability, and team coordination across delivery cycles.

Since working with Kultprosvet, our customers are much happier with the product and its UX. They’ve added flexibility where the system was previously rigid, and they take full responsibility for the project, quickly fixing any issues that arise.

Naomi Rubinstein

Founder at Bettercare

They are the best team we have ever worked with. The application increased the speed of receiving data by 4 times. Data loss was reduced by 10%. Ineffective tasks decreased by 7%. Response rate to customer requests increased by 23%. Our customers have seen significant increases in efficiency.

Aleksandr Podolyan

Technical Specialist & Product Manager, RDO Ukraine

Kultprosvet has executed deliverables perfectly and provided us with a high-quality application. They’ve fulfilled our requirements, and the product perfectly fits our needs. The team’s development efforts have helped our business immensely.

Oleksandr Zainchukivskyi

Head of Technology, AMACO

We've had a very good experience with them. We trust them, and we'll continue to work with them. If we ever need something done, they always deliver.

Luc Lecorre

Co-Investor, Luxury Handbag Company

Kultprosvet was highly knowledgeable, and they made us aware of some issues we hadn’t considered. They explained everything very clearly and helped us understand the broader scope of the work.

Yulia Goldenberg

PhD Researcher, Ben Gurion University of the Negev

The work is always delivered on time, and they are very fair about the pricing. Kultprosvet is transparent, and we know that we can trust them; we are never surprised by anything that comes up.

Cameron Tope

Founder, Rooya (Polysurance)

Our Team

Contact our client support team for a technical evaluation of your needs and details on the resources required for collaboration.
